Déjà-vu

The young man was drafted against his will. Ordered to hunt down the same guerrilla fighters who were his comrades just months before.

Now, he found himself deep in the wilderness doing practice drills with his new squadron when, suddenly, he spotted rustling in the bushes. His heart pounding in his throat, he saw a formation of camouflaged guerrillas looming with AK-47s ready to burst. Instinctively, he raised his rifle, flipped the safety and aimed.

“Don’t shoot.”

A hand on his shoulder.

“It’s just a boy.”

He lowered the rifle, looked again, and was dumbfounded by what he saw: a ten-year-old boy herding cows. Not with an AK-47, but with a stick.

The soldier shared his story with neuroscientist Lisa Feldman Barrett, who recounts it in her latest book. He was desperate to understand how he could have mis-seen what was right in front of him and nearly killed a child.

“What is wrong with my brain?”

There was nothing wrong with his brain. It had worked exactly as it should have.

The red pill

How would you react if someone told you your day-to-day experience is, in fact, a carefully controlled hallucination?

This would sound familiar if you've seen The Matrix. But what if this were science’s version of your reality?

One of the great emerging theories in neuroscience is predictive processing. It asserts that the brain predicts large swaths of experience from memory before it happens, rather than constructing it from what you see, smell, hear and feel as it happens. This saves energy that can be focused as attention on new, uncertain elements in the environment.

The soldier’s brain predicted guerrilla fighters from matching earlier experiences so he could react more quickly. If it really had been a guerrilla, the seconds he gained by predicting the scene rather than reacting after fully perceiving it could have saved his life. The prediction was wrong, but killing the child as a result (tragic as it would have been) wouldn’t have hurt him biologically. The predictive brain wins at natural selection through metabolic efficiency.

Contents

This post looks at how brain and body construct how you experience yourself within the world in every wakeful moment.

Experience is a real-time product of many different parts interacting in a system too complex to model in fine detail. Instead, the focus is on simplifying without straying from the science. We're stepping outside the system to see its mechanics at work and identify leverage points, so we can be more intentional and effective about what we want to improve.

- This first part lays the neuroscientific groundwork with the brain's central principle of predictively processing experience for metabolic efficiency.
- Part 2 maps how different experiential elements interact within the predictive model: emotions, intuition, attention, thought, memory, imagination, self, awareness and action.
- From this sketch, Part 3 explores how to improve relationships to our selves, others, and goal-oriented work.

Sense chaos

From the day you’re born to the day you draw your last breath, your brain is trapped in a dark, silent box — your skull. Blind and deaf, it depends on data collected by sensors to know what's going on inside its body and the world outside:

- Exteroceptive sensors: eyes, ears, nose, skin and tongue bring in sense data from the world around you.
- Interoceptive sensors: neural pathways bring in sense data from within the body.

Sense data isn't actionable. It doesn’t come in as meaningful sights, smells, sounds and touches, but as random scraps of light waves, chemicals, vibrations and air pressure changes. It's reality rendered as numbers without intrinsic meaning: objective and quantitative.

The brain’s job is to interpret sense data in the context of the environment so it can keep you alive and well, i.e. keep you from falling down the stairs, getting dehydrated or becoming lion lunch. In context, data gains meaning and becomes information.

As per information theory pioneer Claude Shannon, information “resolves uncertainty”. In doing so, it informs agency: your brain knows what to do next.

- Data: My body records a drop in temperature and increasing friction from wind. Information: My brain interprets the change as a sign of bad weather to come. Action: I put on a rain jacket.
- Data: My eyes record the image of a lion near the river. Information: I’ll be in danger if I continue on my path. Action: I head to another water source.

Past resolves present

The brain faces an inverse-inference problem: how does it figure out what's going on (causes) from random data (effects)? It solves this by drawing from its lifetime database of past experiences: memory.

Inside the skull, the brain asks itself what happened last time it was in a similar situation. "What are these wavelengths of light most like?" The answer need not be perfect, just close enough to inform effective action.

Chaos becomes structured and navigable through mental maps modelled from past experiences. We make sense of new things through what we already know. Inversely, we can't make sense of data we don't have mental maps for.

For example:

- When you look at a rainbow, you see distinct bands of colour. In nature, however, a rainbow is a continuous spectrum of light: it doesn't have any bands. You see bands because your brain projects colour concepts onto the sense data, smoothing over the variations within each colour category.
- Human speech is a continuous stream of sound. Yet you intuitively make out words when hearing a language you know. That's because your brain has learned patterns it can project back onto speech streams to structure them. Inversely, a language you don't know sounds like random gibberish.

The Beholder's Share

Take a look at these three visuals. You probably see fairly meaningless black lines. Go read the descriptions below and look again. The lines form familiar scenes now. Not because they changed, but because your brain has.

- POV of ski jumper with spectators.
- Inside-view of Napoleon's coat.
- Ship too late to save drowning wizard.

Reading these descriptions made your brain draw mental maps of meaning, which it then projected onto the otherwise random visual data, making the lines mean something.

The conceptual artist Marcel Duchamp famously said an artist does only 50% of the work in creating art. The other 50% is done by the viewer's brain.

“The creative act is not performed by the artist alone; the spectator brings the work in contact with the external world by deciphering and interpreting its inner qualifications and thus adds his contribution to the creative act.” — Marcel Duchamp

Mental LEGOs

Such pattern-matching follows from the brain’s capacity for abstraction. Lived experiences are not so much stored as unabridged memories, but rather compressed into concepts: LEGO-like conceptual building blocks that can be mixed and matched across contexts.

This is how you intuit flowers, watches and wine as “gifts” even if the objects look nothing alike: different realities with the same meaning in a particular context. Inversely, the same reality gains different meaning in different contexts: a glass of wine can mean the blood of Christ, a toast or a cosy evening with friends.

**Abstraction reduces physical realities to their functional features.** How something looks, sounds and smells is abstracted away; it is stripped down to what it can do in a given context. These features become pieces of meaning for the brain to recombine in novel ways and project onto other realities so it can navigate them more quickly.

- Flowers, wine, watches can all be "gifts" in festive contexts.
- Umbrella, coat, plastic bag, car, apartment and a mindset to not care can all "protect from rain."
- Salt, barley, little shells, copper coins, paper notes, mortgages, Bitcoin have all been used as "currency" in context of transacting goods and services.

Contextually integrating concepts abstracted from past experiences is how you intuitively know what to do in situations you've never encountered before — without having to think about it. Memory bits are integrated into functional mental maps to resolve present ambiguity for action.

Abstraction also unlocks imagination: mental modelling that builds on top of memory. The brain playfully remixes mental LEGOs into possible scenarios for the future. It's what orients creativity and pulls attention in a certain direction.

"What you can imagine depends on what you know." — Daniel Dennett

Prediction beats reaction

Scientists used to believe sense-making happened reactively bottom-up. Data moves in through senses and travels up the nervous system into the brain where it gets interpreted through the lens of memory so we can act. Senses record, brain processes, body re-acts — in that order.

Such an input-reaction sequence seemingly matches how we experience the world. For example, the brain's visual system feels like it works like a camera: eyes record visual information and the brain processes it into an image. Hence, the soldier believed something was wrong with his brain. How else do you explain mis-seeing a boy herding cows for guerrillas with machine guns?

Analysis gets you killed

The picture changes in the face of survival. Remember, the brain's job is to keep you alive and well. The constant influx of ambiguous data is cognitively overwhelming. If the brain were to reflexively interpret all of it from the ground up, it'd drown in uncertainty and be (too) slow to act. The soldier would get shot before he'd understand he's in danger.

To avoid analysis paralysis, the brain takes a shortcut. Rather than process all the sense data, it pattern-matches with memory to predict what is most likely to happen next. "Last time I was in a situation like this, what did I see, hear and feel next?" That prediction is constructed as experience.

Experience ⇄ Expectation

The soldier mistook the child for a guerrilla because that's what happened next last time the bushes rustled when he was: (1) in the woods, (2) with his comrades, (3) holding a rifle, (4) his heart pounding.

From memory, the brain (1) meaningfully structures random data and (2) fills in blind spots to proactively create a meaningful experience that guides action. How you see the world is not a photograph, but a construction of your brain so fluid and convincing that it feels like one. Light waves, air pressure changes and chemicals are predictively constructed as objects, sounds, tastes and smells based on what the brain believes most probable from past experience.

Flipping Pavlov

In case it hasn't fully sunk in yet: prediction is to be taken literally. Yes, you sense changes before they actually happen.

Most of the time predictions are accurate and match reality. You look at cows and see cows. But have you ever seen a friend's face in a crowd when they weren't actually there? Ever sworn your phone vibrated when it didn't? You really did see and feel those things. They were sensory anticipations then invalidated by reality. Prediction errors.

The same is true for the experience of your body. Internal changes are sensed before the relevant data from organs and hormones arrives.

- When you're thirsty and drink water, that thirst seems to subside at once. In reality, water takes about 20 minutes to hit your bloodstream.
- It turns out Pavlov's dogs didn't drool as a reaction to the bell. Rather, their brains anticipated the experience of food from their memory of the bell and prepared the body for eating. Similarly, if you imagined your favourite food right now, scans would detect increased activity in brain regions associated with taste and smell, which triggers salivation.

Prediction errors

At any given time, the brain experientially represents what it believes is going on in body and world, and, from there, computes what is most likely to happen next.

It does this in a way that can be described by Bayes' theorem, which specifies how the probability of a hypothesis should be updated from prior knowledge when new evidence arrives. Each time a prediction proves true in a context, the brain attributes a higher probability to it and is more likely to re-project it in the future.
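To make the Bayesian idea concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not a model of actual neural computation: the function name `bayes_update`, the two hypotheses and all the probabilities are invented for the example.

```python
def bayes_update(prior, likelihood):
    """Return P(hypothesis | evidence) for each hypothesis.

    prior:      dict mapping hypothesis -> P(hypothesis)
    likelihood: dict mapping hypothesis -> P(evidence | hypothesis)
    """
    # Numerator of Bayes' theorem: prior times likelihood.
    unnormalised = {h: prior[h] * likelihood[h] for h in prior}
    # Total probability of the evidence across all hypotheses.
    evidence = sum(unnormalised.values())
    return {h: p / evidence for h, p in unnormalised.items()}


# Invented numbers: what the soldier's past taught him to expect in the woods.
prior = {"guerrilla": 0.7, "herder": 0.3}

# Rustling bushes are assumed equally likely under both hypotheses,
# so this evidence alone leaves the prior belief unchanged.
posterior = bayes_update(prior, {"guerrilla": 0.9, "herder": 0.9})

# A second look reveals a stick, which is assumed far more likely with a herder.
posterior_after_stick = bayes_update(posterior, {"guerrilla": 0.05, "herder": 0.8})

print(posterior)
print(posterior_after_stick)  # belief in "herder" rises to about 0.87
```

The point of the sketch is that ambiguous evidence leaves the prior in charge: with only rustling bushes to go on, the strongest memory wins.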

Every once in a while, however, incoming sense data unexpectedly contradicts the prediction. Guerrillas turn out to be a boy herding cows and the friend isn't really there. The brain corrects the experience, our attention suddenly shifts and we feel surprised. We learn: reality forces the mental map to update. The correction will inform more accurate predictions going forward.
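The update loop can be sketched with a simple error-driven rule, a common simplification from learning theory. This is an assumption chosen for illustration, not a claim about how the brain implements learning; `update_prediction`, the learning rate and the numbers are all hypothetical.

```python
def update_prediction(prediction, observation, learning_rate=0.3):
    """Nudge a prediction towards what was actually observed."""
    # Prediction error: the gap between reality and expectation.
    error = observation - prediction
    # Move the prediction a fraction of the way towards the observation.
    return prediction + learning_rate * error, error


# Invented starting belief: rustling bushes almost certainly mean guerrillas.
prediction = 0.9
errors = []

# Three encounters in a row turn out to be harmless (observation = 0.0).
for observation in [0.0, 0.0, 0.0]:
    prediction, error = update_prediction(prediction, observation)
    errors.append(error)

print(prediction)  # the belief has dropped well below its starting value
print(errors)      # each surprise is smaller than the one before
```

Each error is smaller than the last: as the map improves, reality has less to correct.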

The brain works much like a scientist. It continuously makes and simulates hypotheses, then tests them against data:

- In the face of inevitable prediction errors, it can be a responsible scientist and change its models to match data or it can be a biased scientist and selectively filter or even ignore data to fit its hypotheses.
- In moments of learning, it can be a curious scientist, focussing almost solely on input to enrich and update its models.
- Like Einstein, it can daydream and imagine, simulating the world from its mental models and concepts alone.

While intention and habits matter (see Part 2 and Part 3), your brain's scientific style is first subject to its bodily resources. Its main job is to keep you alive and well, to make sure your biological needs are met in time. So when you're running low on glucose, dehydrated, sleep-deprived or in danger, the brain will spend less energy on processing sense data. It will go with its predictions without checking for errors, because that is the shortest path to restoring its bodily balance.

Metabolic efficiency

Brains predict and then correct rather than detect and react because it reduces uncertainty and costs less energy. Reactive brains would have to compute everything on the spot from scratch every single moment: too ineffective and inefficient to be naturally selected.

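Predictive brains are often described through Karl Friston's free energy principle. In Friston's formulation, "free energy" is an information-theoretic measure of surprise (roughly, prediction error) rather than metabolic energy: organisms act to keep it low so as to maintain homeostasis, a state of internal biological balance. Since every surprise calls for a costly correction, fewer surprises also mean less energy spent.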

Body budgeting

The brain carefully budgets bodily resources (salt, glucose, water) to succeed efficiently in any given context. It continuously makes predictions about when to (1) deposit, (2) withdraw or (3) invest bodily resources given the situation. For example: can we relax, rest and replenish (deposit), should we release cortisol to ready the body for action (withdraw) or is it a good time to work out so the body grows stronger (invest)?

Predicting known situations saves energy to spend in unknown, potentially dangerous, situations. When it's unsure what will happen, the brain is more likely to predict threats and allocate resources accordingly, e.g. pumping the body with cortisol to prepare for flight.

Such threat predictions more often turn out false, with the energy wasted, as was the case for the soldier, **but the spend probabilistically makes sense for survival.** Better safe than sorry, or dead.
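The arithmetic behind "better safe than sorry" can be sketched as an expected-cost comparison. Everything here is an assumption for illustration: the function name `cheaper_to_prepare`, the arbitrary cost units, and the simplification that preparing fully avoids the cost of being caught off guard.

```python
def cheaper_to_prepare(p_threat, cost_if_caught_unprepared, cost_of_preparing):
    """Compare the fixed cost of preparing with the expected cost of not preparing."""
    # Expected loss from ignoring the threat: probability times damage.
    expected_cost_of_ignoring = p_threat * cost_if_caught_unprepared
    return cost_of_preparing < expected_cost_of_ignoring


# Invented numbers: the threat is real only 5% of the time,
# but being caught unprepared is a hundred times costlier than a false alarm.
high_stakes = cheaper_to_prepare(
    p_threat=0.05, cost_if_caught_unprepared=1000.0, cost_of_preparing=10.0
)

# With low stakes, the same false-alarm rate no longer justifies the spend.
low_stakes = cheaper_to_prepare(
    p_threat=0.05, cost_if_caught_unprepared=100.0, cost_of_preparing=10.0
)

print(high_stakes)  # True: preparing pays off despite being wrong 95% of the time
print(low_stakes)   # False
```

Under these assumptions a prediction can be wrong nineteen times out of twenty and still be the cheaper bet, as long as the one miss is expensive enough.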

In fact, the brain's readiness to check its predictions for errors against sense data is yoked to heart rate. The faster the heart pounds, the more stubbornly the brain will insist on its predictions to construct experience, wilfully ignoring all invalidating sense data. You see shepherds as guerrillas and sticks as AK-47s in a real-life hallucination.

The map predicts the territory

Predictive brains can move faster through territories using maps they already have rather than constantly drawing new ones on the go. By assuming known parts of the territory, the brain saves energy it can focus as attention on new, uncertain parts — those we are not sure to be safe yet.

Of course, as the saying goes, the map is not the territory. But it doesn’t have to be: it just needs to approximate the territory well enough to be practical. That point about utility is often left out of Korzybski's famous quote. When the map fails to be useful and the territory hits us in the face, it is forced to update so the brain can predict better next time.

“A map is not the territory it represents, but, if correct, it has a similar structure to the territory — which accounts for its usefulness." — Alfred Korzybski

Context matters

Crucially, the map is bound to the territory. Human truths (i.e. mental maps) are contextual: they hold as far as they are useful in a given territory.

What is true in some situations might not be in others, which is what we mean when we say "it's relative." Different contexts have different truths and require different mental models to navigate effectively. When we predict from an old map in a new territory, we trip and fall: prediction errors. The brain learns and fills in the blind spots.

As trial-and-error adapts mental maps to mirror differences across territories, they become dynamic and context-independent. Predictions from these models are true in a greater variety of contexts, i.e. they have greater reach and are more probabilistically sound.

Novelty costs

The free energy principle implies the brain innately avoids novelty for as long as it can afford to.

Because correcting internal models is metabolically costly, uncertain or contradicting data is purposefully left out in constructed experience. The brain doesn't predict what it doesn't know and it doesn't map what it doesn't have to, to save energy. You never see the full picture of reality, only the sliver the brain has learned about in the past.

"We all think we know how the world works, but we've all only experienced a tiny sliver of it." — Morgan Housel

Learning ROI

The brain only corrects its models if the future risk of not doing so warrants the cost. Metabolic investments need to pay off.

- If your model predicts that lions are friendly and one almost bites off your arm because you tried petting it at the zoo, your brain will update the model so you are more careful next time.
- People who believe they don't need sunscreen only do so until they get severely sunburnt.
- Certain behaviours work better in some socio-economic contexts than in others. For example, kids "too cool for school" typically find that their habits of partying, bullying, substance abuse, vanity and arrogance work against them later in adult life. Their brains have to remodel for the sake of well-being. The longer it takes, the more dysfunctional it becomes.

We predict what the world will be like based on what we already know and in return the world adjusts those predictions by feeding back where they're wrong.

However, updates cost energy, so by default the brain avoids being proven wrong and sticks to what it knows. Here we may find a root of addiction: the brain avoiding the struggle of change by escaping into an easy distraction. (Part 3 further explores addiction and its opposite, personal growth.)

To learn beyond what is biologically necessary therefore takes intention, a willingness to expose our brains to information that is new or conflicting. You need to actively seek to be proven wrong by confronting your assumptions across contexts.

Experiential blindness

If your brain doesn't have concepts to anticipate and interpret sense data, you are experientially blind to it.

- Unless you already had the concepts, the visuals shown earlier looked like meaningless black lines at first. Once you were handed concepts, you saw something in them. The visuals stayed the same; it's your brain that changed and now adds its prediction.
- A spoken language you don't know sounds like gibberish. Once you learn the language, the same sounds you didn't know what to make of earlier all of a sudden gain meaning.
- Some people who are born blind can gain eyesight through surgery. Still, for days, weeks or even months they remain blind to parts of reality because the brain doesn't know how to experientially map them.

We all see the world through our unique prisms, modelled after the unique mosaic of (1) our own lived experiences and (2) those of others we are subjected to through relations and media (movies, books, timelines).

And so two people looking at the same reality can come to wildly different conclusions about it. The more different the information ecologies they've lived in, the more different their conceptual maps and the more they'll struggle to find common ground. They're experientially blind to each other.

It explains why conversations about politics and religion are mostly dead on arrival. These are games of language with no cost to being wrong. Each participant wants the other to see what they see and can simply keep arguing from the different concepts modelled from their different pasts, since there are no risks for well-being and no prediction errors. Not changing your mind is the path of least metabolic resistance.

Empathy can bridge the gap by assimilating each other's concepts in open dialogue, enriching them in the process. But it only works if the intention is to learn each other's concepts rather than to be 'right', and if it comes with the humility to acknowledge the inherent subjectivity of one's point of view. (Part 3 dives deeper into empathy.)

Echo chambers

Each time reality doesn't correct predictions, the underlying models are judged effective and assigned a higher probability for future prediction. Predicting present from past, thinking patterns self-reinforce and become habitual over time. We naturally see what we want to see, hear what we want to hear, and believe what we want to believe.

In nature, misguided predictions cost you, so you must change your mind to survive. In modern environments, however, most risks have been designed away, so being wrong doesn't cost you, at least not biologically. When prediction errors don't occur or are easy to rationalise away, you can be very wrong for a very long time and be perfectly fine throughout. Naturally selected for metabolic efficiency, brains are wired to get stuck in echo chambers in risk-free human contexts.

From sensation to prediction

Baby brain development demonstrates how the predictive mechanics are tuned by mapping bodily sensations (emotions) to environments. It makes for a good summary before moving on.

A new-born baby's experience is pure sensation. They're overwhelmed by sense data they don't know how to deal with because they have no memory to predict from. Their world is uncertain and chaotic.

As the baby crawls, grabs and plays, its brain maps sensations to the environment and, through trial and error, learns what it should and shouldn't do. Thanks to sensory feedback, it learns that the couch is soft, the floor hard and the dog kind.

When sensation doesn't resolve uncertainty, the baby turns to mom and dad. When they are calm, the baby calms too and thus learns to be calm in said situation. Parents regulate their baby's emotional state with soothing touches, looks and sounds.

This is why caregivers are crucial to baby brain development. By contextualising sense data, they help the baby form its first mental maps to predict from. These maps make the uncertain navigable so the baby brain gains control and agency. As the empowered baby crawls through new territories, prediction errors refine its models and abstraction of patterns compounds its mental LEGO library.

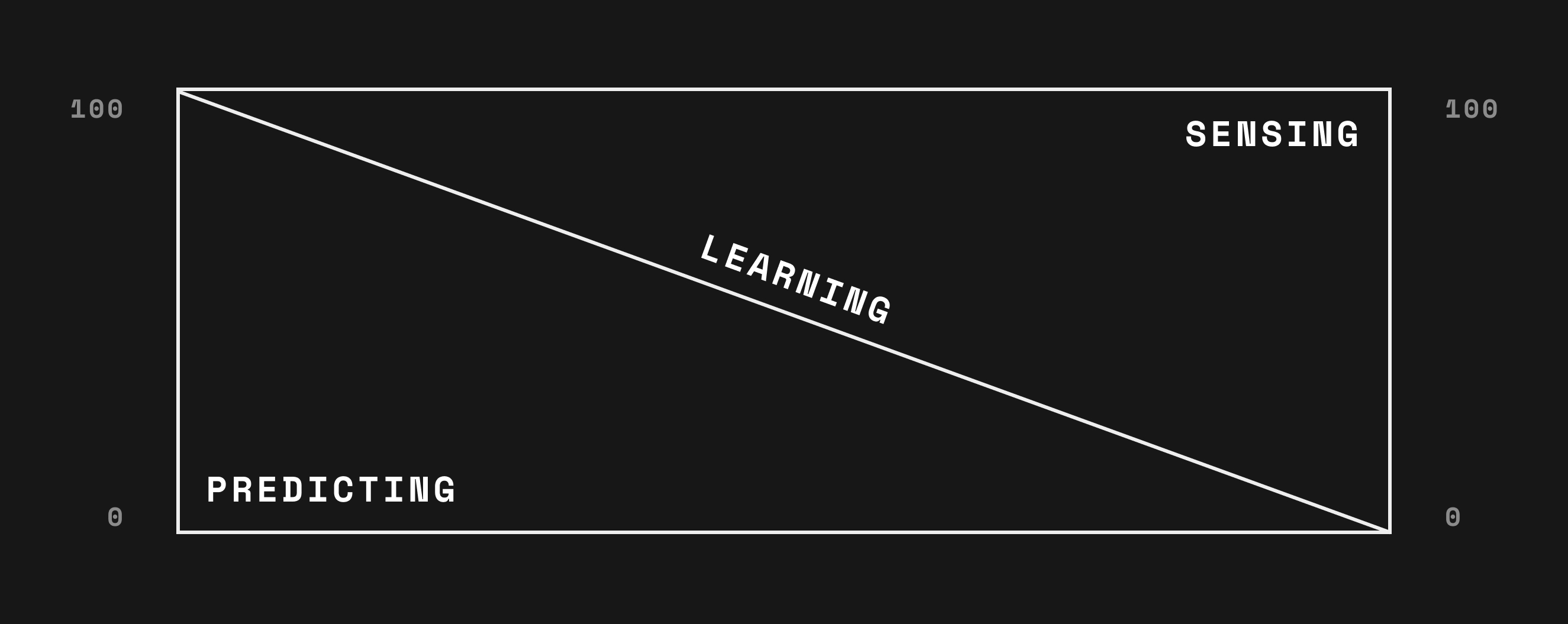

As it ages, the brain typically predicts more and more while sensing less and less. After a while, all the prediction errors its surroundings have to offer have been learned and internal models stop being challenged. At that point everything is expected and nothing feels exciting. Wisdom from across the ages in fact suggests we start to feel old not so much because of age but because we stop discovering and learning new things. Curiosity is the anti-aging cure.

"Once you stop learning, you start dying." — Albert Einstein

"We don't stop playing because we grow old, we grow old because we stop playing." — George Bernard Shaw

Not having memory to predict from is one reason babies cry and sleep so much. The constant stream of ambiguous sense data and prediction errors exhausts the brain. In contrast, adult brains smooth-sail in familiar environments because every detail can be predicted without a cognitive sweat. But, like babies, they too tire quickly in unmapped exotic environments, as happens with culture shock.